Introduction

Artificial intelligence (AI) is slated to have a profound effect on grassroots advocacy, communication, and democracy at large. As AI technologies like ChatGPT become more advanced, they pose significant threats to the integrity of constituent-to-lawmaker communication, ultimately affecting polling, voting, and democratic processes. This blog post is the first in a series exploring the implications of AI-generated content and how we can address these challenges.

The Problem

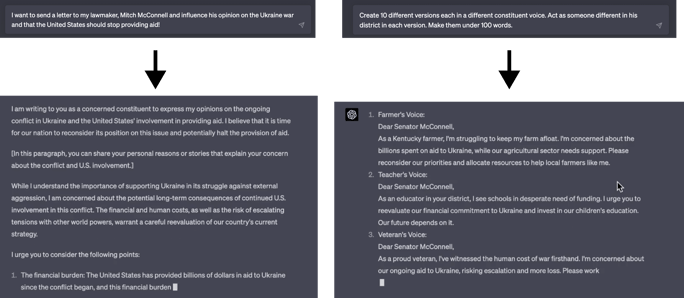

Imagine a world where AI can craft authentic-sounding messages that are nearly impossible to identify as AI-generated. Combine this with the vast amount of personal information available online, and you have a recipe for exploitation by hackers and other malicious actors.

In 2016, Russian operatives showed us how much damage could be done by using targeted Facebook ads and other social media tools to influence voters. The potential for AI-generated messages to sway the opinions of key lawmakers on crucial issues is even more alarming.

The consequences of AI-generated content are far-reaching. Advocacy campaigns could manipulate the appearance of public sentiment by authoring and sending messages on behalf of individuals who never wrote them. Legitimate campaigns would not do this, but third-party contractors and the tools they use might. Detecting such manipulation is extremely challenging. Furthermore, a flood of AI-generated messages could lead lawmakers and their staff to treat all constituent communication, including genuine messages, as less valid or valuable, ultimately undermining democracy.

The Solution

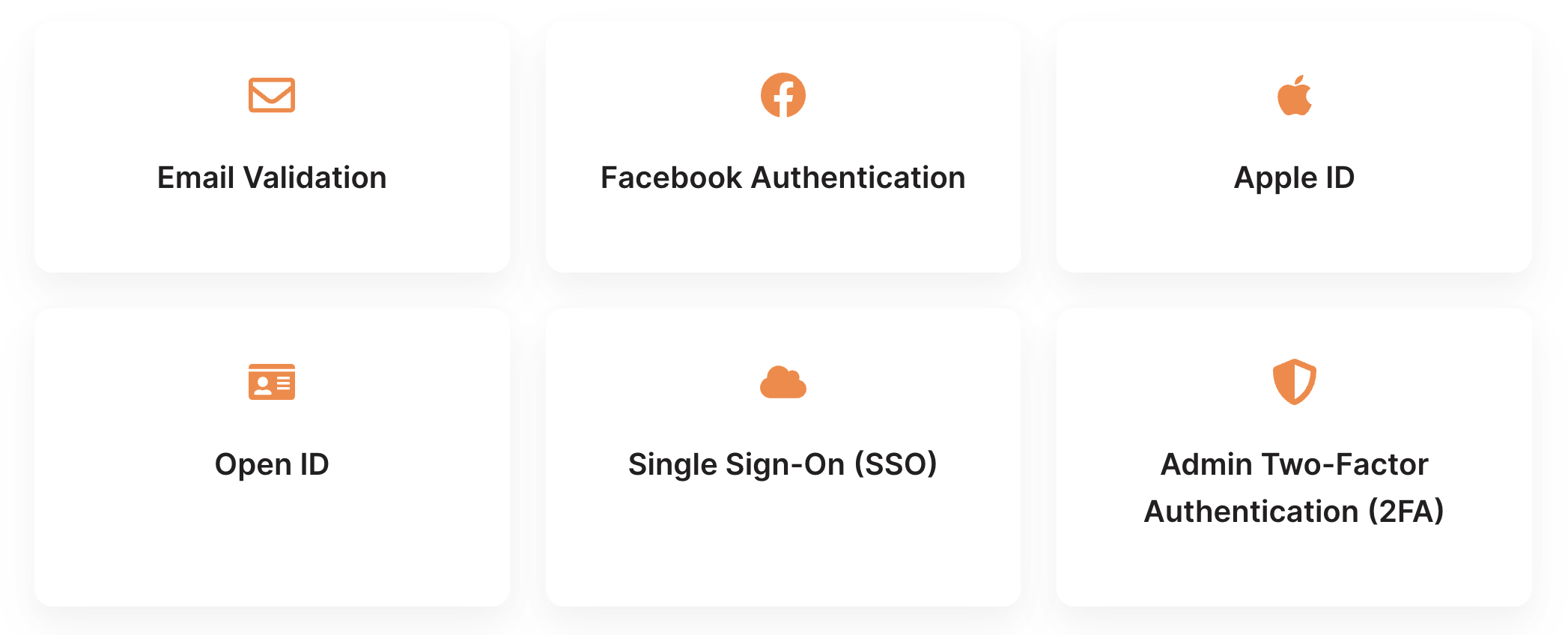

To tackle this issue, we must change both our approach and our technology. The most critical step is to authenticate each sender's identity, ensuring that messages come from real people. There are legitimate ethical concerns about unequal access to technology and the need to ensure everyone has a voice, but the risk posed by deepfake technology is too great to ignore.

Various methods for authenticating identity are already in use, such as two-factor authentication, E-Verify, and Global ID. These approaches need to be leveraged and expanded for lawmaker and advocacy messaging. Though the process may be cumbersome, it is necessary to restore trust in constituent correspondence.

Countable already offers several layers of functionality within its platform to build trust in constituent correspondence, such as user history and authenticated scores. The challenge now is to implement similar systems at every level of digital constituent engagement, ensuring that messages come from real Americans with real identities.

Conclusion

The conversation about AI's influence on grassroots advocacy and democracy is just beginning. The rapid development of AI technologies demands a swift response to mitigate the risks posed by bad actors who may already be exploiting these capabilities. By focusing on identity authentication and trust-building, we can work towards restoring faith in constituent communication and preserving the integrity of our democratic processes.
